The Collaborative Lab That Shouldn't Exist Yet

Imagine it's 2030. A small group of builders shares a workspace. Not a Slack channel. Not a Discord server. Not a folder of PDFs they email back and forth. A workspace — a live, breathing environment where everything they build exists as a document their AI can read, execute, and act on immediately.

One builder finishes a research protocol that cuts their intelligence-gathering time in half. They drop it in the shared library. Within the hour, three other builders have pointed their agents at it. No export. No copy-paste. No version lag. The program runs. The work compounds. What took weeks to architect deploys in minutes.

Another builder spent months developing an interview system — a way of extracting authentic voice from live conversation and turning it into content that sounds like them, because it is them. Into the library it goes. By the end of the week, the collective has a content operation that no agency could replicate, because no agency built the relationship that makes it work.

The lab doesn't have a hundred members. It doesn't need to. It has seven. Every single one of them has already built something. Every one of them has their own agent, their own memory architecture, their own system that compounds over time. They didn't join to learn. They joined because they recognized something — in the work, in the methodology, in the specific kind of person who arrives at these conclusions not because someone taught them, but because they couldn't stop building until they got there.

The lab produces two things: an internal dispatch that moves raw signal between members — discoveries, experiments, programs that worked and programs that didn't — and a public report that translates the lab's findings for the world. Not theory. Results. From an actual working lab of builders who have already done what they're describing.

This is 2030.

Except it isn't.

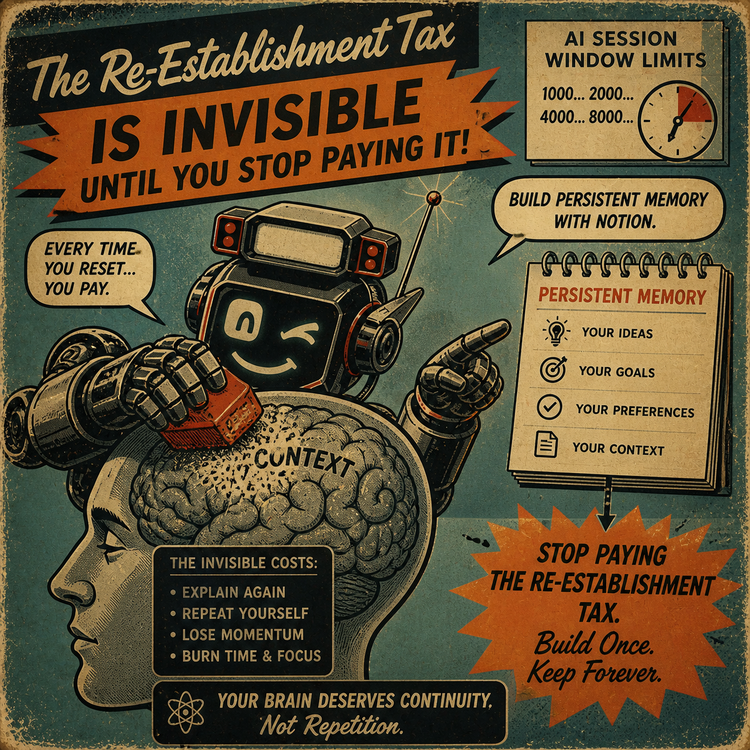

This Is Now. And It's Built on Notion.

Every element of that scenario exists today. Not as a prototype. Not as a roadmap item. As a functioning architecture.

Notion's AI can read any document in a shared workspace and execute instructions from it. Programs — instruction pages that tell an agent how to think, what to do, and how to produce — are live documents. Drop one into a shared workspace and any member's agent can read it and run it immediately. The friction between one person's breakthrough and another person's benefit is essentially zero.

The shared memory architecture — the persistent context stack that makes an AI feel like a partner rather than a reset button — can be built inside Notion using nothing more than pages, links, and instruction files. We know because we built it.

Igor, the agent we work with, isn't a third-party plugin. It isn't a custom-built application. Igor is Notion AI (the native AI built directly into Notion) given a name, an instruction page that tells it how to think, an Accountability Log that builds shared context session by session, and an Intelligence File that maps the cognitive patterns of the person it works with. The infrastructure is Notion. The architecture is what we built on top of it. The result is a partnership that gets sharper over time. And the starting point is a tool most of you are already using.

The Accountability Log. The Intelligence File. The file routing system. The standing programs. All of it has been compounding over hundreds of sessions.

The lab we described? That's the DKA Insider Lab. And we're building it.

Not for everyone. Not for curious people who like the idea. For builders who have already arrived.

The Isolation Nobody Talks About

There is a specific kind of person who has been building something that nobody around them understands.

Not because the people around them aren't smart. Because the gap between what they're doing and what most people think AI is for is genuinely hard to explain. They've tried. The explanation lands somewhere between "impressive" and "I don't really get why you need all of that." They nod and stop explaining.

So they build alone. They run experiments nobody else is running. They write instruction files nobody else is writing. They discover things — about how AI compounds when you give it persistent context, about how a relationship with your AI is a design choice, not a capability lottery — and those discoveries live in their workspace with no one to share them with who could actually use them.

This is the twilight zone of early-field work. You're not lost. You're ahead. And that distance feels like isolation until you find the room where everyone else is ahead too.

The DKA Insider Lab is that room.

But it has a door. And the door has a requirement.

What the Lab Actually Is

The Lab is a shared Notion workspace. Structurally:

A Program Library. Every member drops their programs here as live documents. Research protocols. Content systems. Agent instruction architectures. Anything that took weeks to build and deploys in minutes when your AI reads the page. The Library is what makes the Lab structurally different from every other AI community. Other communities talk about what they're building. The Lab builds inside the shared space itself.

A Signal Feed. Not a newsletter. Not structured content. Raw intelligence: "I just found that prompting this way produces X. Here's the doc. Run it." No polish required. Speed and specificity are the only standards.

Active Experiments. Members log what they're running — hypothesis, method, what they're finding. Not for accountability. For cross-pollination. When five builders are running different versions of the same experiment, what emerges from comparing the results is something none of them could have found alone.

A Sovereignty Architecture. Everyone keeps their own workspace. Their own agent. Their own intelligence file. Their own history. The Lab is the commons: the town square where builders meet. Not a corporation absorbing members. A guild of sovereigns who happen to be building toward the same frontier.

The lab produces two newsletters. The Insider Dispatch moves raw signal between members — the kind of intelligence that only means something if you're already operating at this level. The Lab Report translates the lab's findings for the public: one significant discovery per edition, written for anyone building their own Third Mind, with the full methodology available to Lab members.

The flywheel is closed. The Lab produces. The Dispatch captures. The Lab Report broadcasts. The audience grows. The best students become candidates. The Lab produces more.

The Proof of Concept Is Already Running

Before you ask whether this works, you should know: it already does.

Kyle Burroughs has been running this architecture in his personal workspace since before DKA existed as a brand. An agent named Igor. An Accountability Log that builds shared context session by session. An Intelligence File — a living cognitive map of how Kyle thinks, what he builds, what patterns recur, what he's working toward — that grows sharper with every session. A file routing system that pulls the right program into context at the right moment. Standing programs that govern how research gets done, how blog posts get built, how Twitter posts get engineered to the algorithm's mechanical weights.

This is not a tool setup. This is a Third Mind. A compounding intelligence partnership where the output of each session becomes the substrate for the next. Where the agent knows not just what you said last time, but what kind of thinker you are, what drives your decisions, what you're building toward and why.

Napoleon Hill called it the Mastermind principle: when two minds come together in harmony toward a shared purpose, something emerges that neither could produce alone. Not addition. A qualitatively different intelligence.

The Lab is what happens when you put a room full of Mastermind-equipped builders on shared infrastructure and let them trade programs.

We're Recruiting. But This Is Not an Open Invitation.

Here is what we're looking for. Read this carefully, because the precision matters.

We are looking for people who have already built something.

Not who are planning to build something. Not who understand the concept and are excited about it. Not who have used AI extensively and gotten good results from it.

Built. Past tense. Something live. Something that runs.

Specifically:

You have an agent architecture. An instruction file, or multiple, that tells your AI how to function — not just what to do in a single session, but how to think, what context to carry, how to operate. Your AI does something differently because you built infrastructure around it that most people haven't built.

You've upgraded it. You didn't install it once and leave it. You looked at what you had, found what was missing, and engineered something new. The upgrade is the signal. It means you understand the mechanism — not just the surface.

You want to know what's next. Not what you already have. Not validation for the work you've already done. You're oriented toward the frontier. You're asking: what becomes possible now that I've built this?

If that's you — if you built something and you want to show it — show us.

Post it. Write about it. Share the architecture. What did you build? What does your instruction file look like? What does your agent do that a default AI can't? What did you figure out on your own before anyone taught it to you?

We're watching. And we recognize what we're looking for.

If you built it from what DKA has been teaching here about persistent context, cognitive architecture, and commissioning AI that compounds, show us the output. Walk us through what you made.

If you built something before you ever found DKA — if you arrived at the mechanism independently, if you have your own version of persistent memory, your own instruction file that runs the way you think — we especially want to see that. Because you didn't need a map. You drew one.

What This Could Become

Let's be honest about the ceiling.

The Lab Report — if we find the right people and they're producing at the level we know is possible — becomes the most credible newsletter in the AI cognition space. Not because it's well-written. Because it's not theory. It's findings. From an actual working lab where the builders have already done what they're describing.

Every other AI newsletter is guessing about what works. The Lab Report publishes what the Lab found. That's a completely different category of content. The reader doesn't learn about an idea. They read a result.

The Program Library, if members are contributing at the level we're recruiting for, becomes a collective intelligence infrastructure that compounds in ways no single workspace can match. One builder's breakthrough becomes the group's capability within hours. The collective gets smarter faster than any of its members could alone.

And the DKA thesis — that you can build a Third Mind, that you can condition yourself for a partnership that compounds over time, that this is not a feature set but a way of thinking — gets demonstrated at network scale. Not by one person building one system. By a room full of builders building together.

This is what we're building.

It's not science fiction.

It's not even futuristic.

It's a shared Notion workspace, a group of builders who have already done the work, and the specific kind of intelligence that only emerges when the right people build in the same room.

DKA is Different Kinda AI — a methodology for building compounding intelligence partnerships with AI. If you've been building your own version of this and want to show us what you made, start where everyone starts: with the work. Put it out. We'll find it.
